UBR-1 on ROS2 (Part 3)

16 Jun 2020ubr1 robots ros2 In my last post I finished porting the

robot_controllers package to ROS2. The last piece of hardware to

support the UBR-1 in ROS2 was the head camera, and it turned out to be the most

challenging part.

Note: I’ve created a new

Porting openni2_camera

The head camera in the UBR-1 is a vintage 2013-era Primesense Carmine. Getting it

to run in ROS2 meant porting the openni2_camera package.

In ROS1 the camera driver was implemented as a nodelet for efficiency when pairing

it with components from image_pipeline.

Nodelets have been replaced by components in ROS2. The setup for a

ROS2 component is actually quite simple and this is a part of ROS2 that is

very well documented:

An aside: most components are derived from rclcpp::Node. A number of

calls in the rclcpp API require that you pass an rclcpp::Node::SharedPtr.

No problem, Node derives from

std::enable_shared_from_this,

so you can call shared_from_this() to get the pointer. BUT - beware,

this is only works if the instance was created as a shared pointer (not a problem

when using the rclcpp ComponentManager to load your component), AND after the object is fully constructed.

I spent a good 20-30 minutes trying to figure out whey my camera driver kept

throwing a bad_weak_ptr - even reading through the code for

ComponentManager, before realizing it was happening in a function being called from the

constructor!

The next challenge in porting openni2_camera is that ROS2 doesn’t yet support subscriber connect callbacks. These are frequently used in ROS1 to implement lazy publishers. Basically, when creating a publisher you would register a callback which gets called whenever someone subscribes or unsubscribes from your topic. This way you can decide whether to actually do heavy work - or in the case of a driver, possibly start or stop data transmission from the hardware.

In the case of openni2_camera - it is actually extremely important to lazy publish. There are multiple

camera streams, but you cannot actually stream them all at once so you NEED lazy

publishers. I ended up working around this by creating a timer which calls what would

be the subscriber connect callback every second. It’s a bit of overhead, but way less than

publishing lots of unneeded data. Along the way, I found out that the count_subscribers

function in rclcpp doesn’t respect remapping, and

so the only way to properly get the subscriber count is to call get_subscription_count

on the publisher. Since the openni2_camera uses image_transport this meant tracking

down that getNumSubscribers on the the camera publisher class wasn’t implemented

yet (I think the port predates get_subscription_count being added). If you want to try

out the openni2_camera package in ROS2, for now you’ll need to use this

PR that I created (but hasn’t been

merged yet).

Loading Components

The openni2_camera driver only outputs the raw RGB camera and raw depth camera streams. To get

things like sensor_msgs/PointCloud2, we need to construct pipelines using

the components of depth_image_proc. In ROS1, these were implemented as nodelets.

At some point they were ported to ROS2 as components, but not quite correctly (or maybe they

were correct when they were ported). A pair of

quick

PRs

got them working again as components, and got the parameters working.

The next step was to setup a launch file that loaded the camera driver, some components

to rectify the RGB and Depth images, and a component to create a point cloud. In ROS1, this

existed as the packages rgbd_launch and openni2_launch. Due to

the lack of lazy publishers and subscribers, you really can’t build the same lazy graph

that those packages defined right now. So I built a minimal graph of sensor processing

that I needed in a single launch file.

With this prepared, I could run the launch file and

ros2 topic hz /head_camera/depth_registered/points showed it was publishing!

ROS2 Parameters

Let’s back up and talk about that depth_image_proc parameter PR for a moment.

Starting with ROS2 Dashing, parameters needed

to be declared. If you try to access an undeclared parameter, you get an error, unless you

use get_parameter_or - which won’t throw an error. Unfortunately, that means code that used

get_parameter_or and was written pre-Dashing might just not actually load parameters correctly.

One of the big benefits of declaring all parameters is that you can do:

ros2 param list

And get all the parameters. This is actually huge - as a maintainer of navigation in ROS1, I have had more than a handful of occasions when I learned of a parameter that was undocumented but widely used, and which I didn’t even know existed. ROS2 might lack documentation for things - but the introspection is really nice and can be a work around for missing documentation.

RVIZ Woes

The next step is clearly to look at the point cloud in RVIZ2. Should be super easy, right?

Not so fast. In ROS1, any subscriber could connect to a publisher of the same topic message type. ROS2 introduces Quality of Service settings and there are incompatible matches. RVIZ connected to the topic, but didn’t show any data.

In ROS2 Foxy, you can run ros2 topic info topic_name --verbose and it will print

the QoS information for each publisher and subscriber. This is super helpful - but I unfortunately

didn’t find that until a few days after I was debugging this. As it turns out, depth_image_proc

components publish “best effort” but RVIZ subscribes with “reliable” and this is incompatible.

In Foxy, rosbag is apparently able to deduce the publisher QoS and connect correctly,

I’m planning to look into how that was done and see if I can make RVIZ work a bit better (Update:

If you expand the “Topic” box you can manually change the QoS settings).

While we’re talking about RVIZ2 challenges, I’m also going to note this PR which works around the fact that RVIZ2 is apparently entirely single threaded and the single thread runs everything in the same update loop. Keep that in mind if things aren’t visualizing right, it may not be your code.

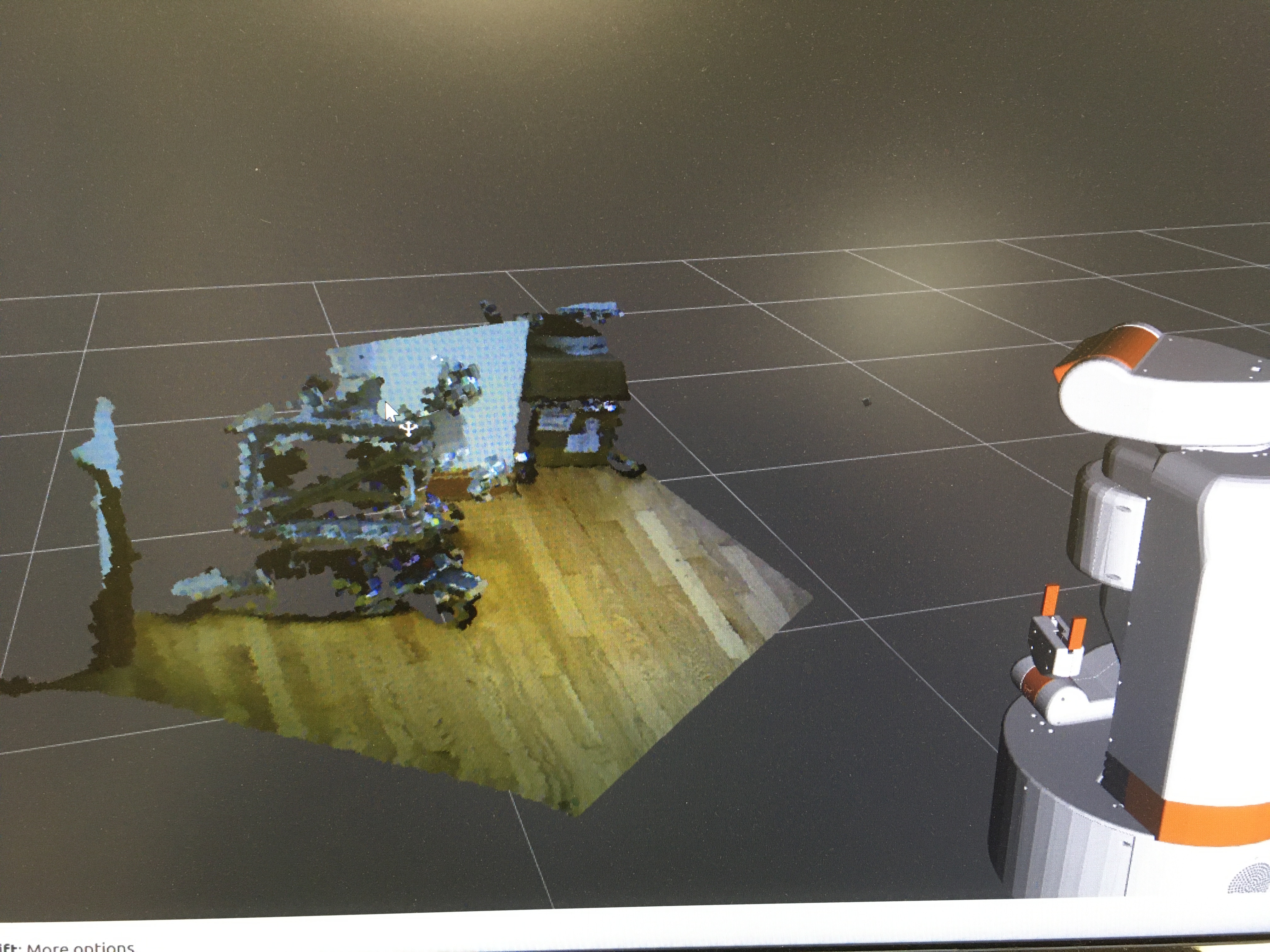

Finally, I got the QoS correct (by hacking depth_image_proc, since it compiles faster) and had my point cloud:

Just kidding, I still had to find out that my timestamps were wrong and message_filters

was filtering out all my messages. Once I fixed that in the openni2_camera package, I got the

view in RVIZ2.

Next Steps

For anyone wanting to try out the openni2_camera package in ROS2, there is more detailed information in the ticket on the openni2_camera package.

I’m continuing to clean up issues in the packages that I’m porting, but my next big step is getting

navigation setup on the UBR-1 in ROS2. That post will probably come a little slower than these

last few have. I’m also working on porting the