22 Oct 2024

ubr1

robots

ros2

Yet another post on ROS2 and the UBR-1. Here are quick links to the previous posts:

Installation

MoveIt2 is still evolving, and the Debian builds seem to be quite out of date most

of the time, so I would suggest doing a source install. I’ve had

good results lately with the main branch building on both Iron and Jazzy. In

addition to the moveit2 repository,

I’m also building the ros2 branch of

moveit_msgs and

moveit_task_constructor from source.

MoveIt2 Setup Assistant

Everything in MoveIt really starts with the MoveIt Setup Assistant (MSA). Unfortunately,

the MSA was one of the last components migrated to ROS 2 (at least partially because launch

files are massively different from ROS 1). While the MSA is still a bit brittle,

it is pretty well documented with a

tutorial

which I followed quite closely.

The MSA basically exports a new ROS 2 package, typically called [robot_name]_moveit.

You can find my ubr1_moveit export

here.

This package includes a custom set of configuration and launch files for the robot

to use MoveIt2. In comparison to the files generated in ROS 1, these end up being a bit

less easy to modify since most of the “launch” file is actually part of the

moveit_configs_utils package.

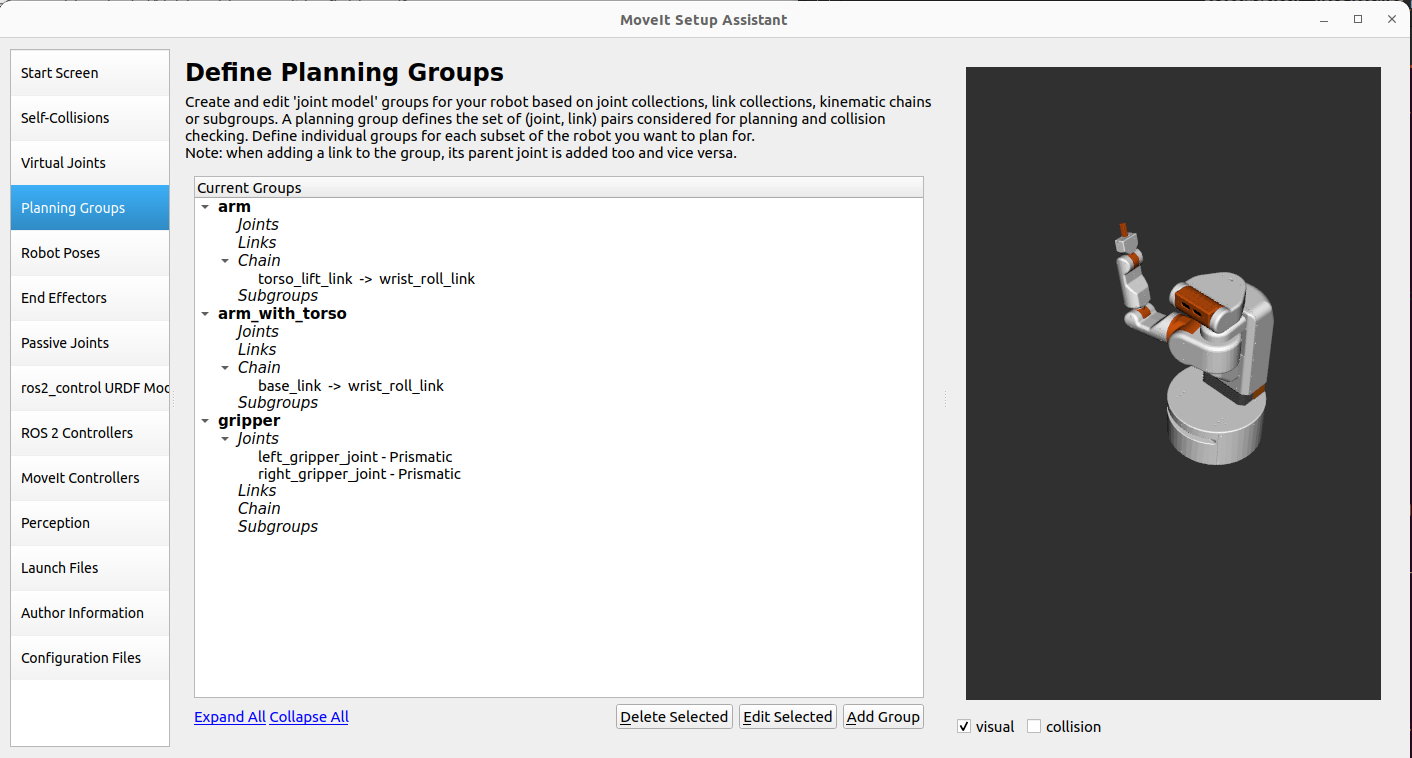

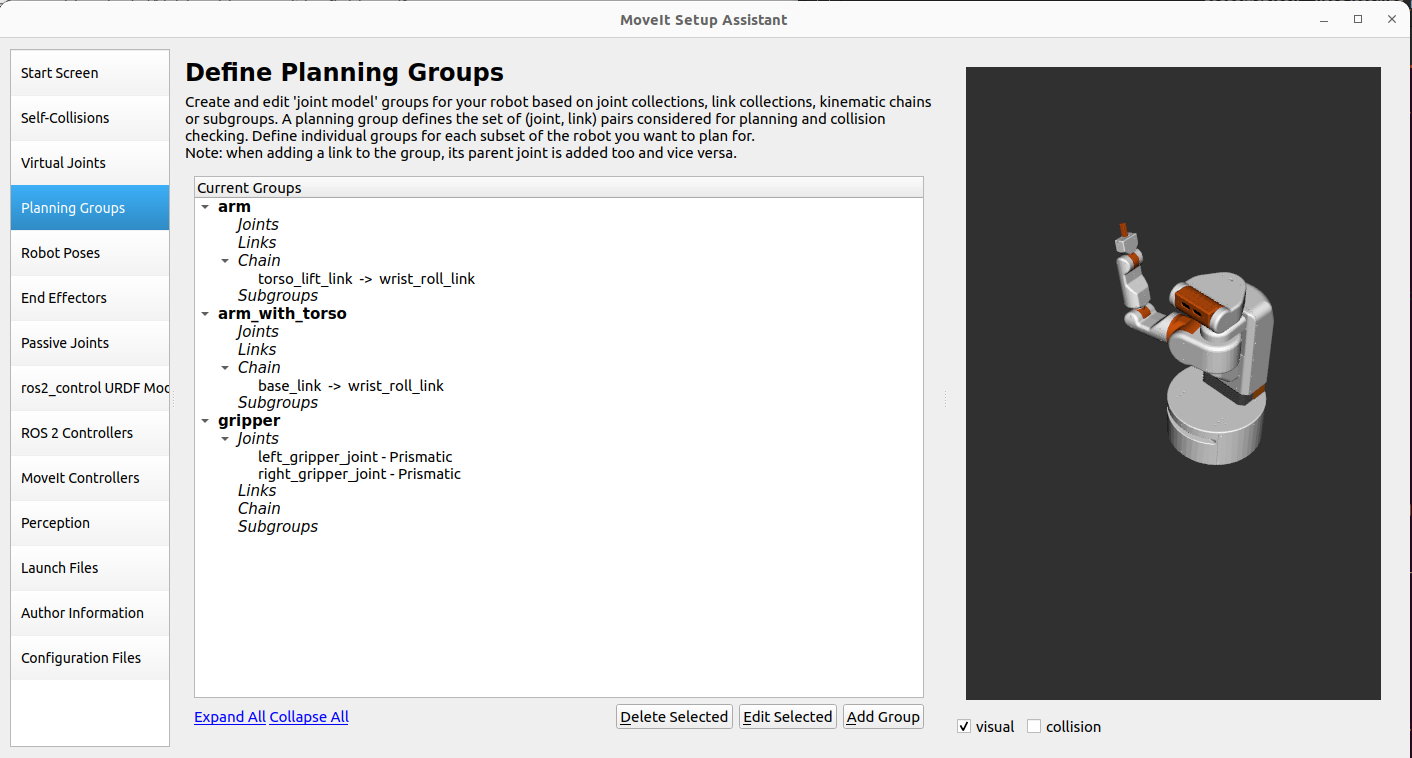

For the UBR-1, I defined three planning groups:

- arm - This is defined as the seven joints of the arm and is the usual
planning group. I also defined a ‘ready’ pose that I use for getting the
arm out of the way of the camera.
- arm_with_torso - This is again defined as joints, but this time including
the torso lift joint. This planning group is occasionally useful if you need
a larger vertical range of motion.
- gripper - This is defined as the two gripper joints, and no IK solver
is configured for the group. Poses were defined for “open” and “close”.

After first exporting the MSA config, I had to make a few minor tweaks. The most notable

is that I had to add acceleration limits to the joints as the Time Optimal Parameterization

now requires acceleration limits. These values were added to the config/joint_limits.yaml

file.
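As a rough illustration of that tweak, the sketch below adds acceleration limits to a dictionary shaped like the joint_limits.yaml that the MSA exports. The joint names and the 1.5 rad/s^2 value are placeholders chosen for the example, not the UBR-1's actual settings, and the helper itself is hypothetical.

```python
def add_acceleration_limits(joint_limits, max_acceleration):
    """Return a copy of joint_limits with acceleration limits enabled on every joint."""
    patched = {}
    for joint, limits in joint_limits.items():
        updated = dict(limits)  # copy so the exported values are left untouched
        updated["has_acceleration_limits"] = True
        updated["max_acceleration"] = float(max_acceleration)
        patched[joint] = updated
    return patched


# Hypothetical excerpt of an exported config (names and values are placeholders).
exported = {
    "shoulder_pan_joint": {
        "has_velocity_limits": True,
        "max_velocity": 1.0,
        "has_acceleration_limits": False,
        "max_acceleration": 0.0,
    },
    "elbow_flex_joint": {
        "has_velocity_limits": True,
        "max_velocity": 1.0,
        "has_acceleration_limits": False,
        "max_acceleration": 0.0,
    },
}

patched = add_acceleration_limits(exported, 1.5)
for joint, limits in patched.items():
    print(joint, limits["has_acceleration_limits"], limits["max_acceleration"])
```

In practice each joint would get its own value rather than one shared number; a single value just keeps the sketch short.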

I also had to manually update the collision matrix in the SRDF file to allow collision

between the bellows and the torso/base. I’m not sure why the collision checker in the

MSA didn’t pick up that these are always in collision, but I couldn’t plan without these

collisions being allowed.

Definitely commit any changes to your configuration before re-exporting with the MSA:
in many cases it will overwrite or lose some of your changes, and git diff will help
you track what was lost.

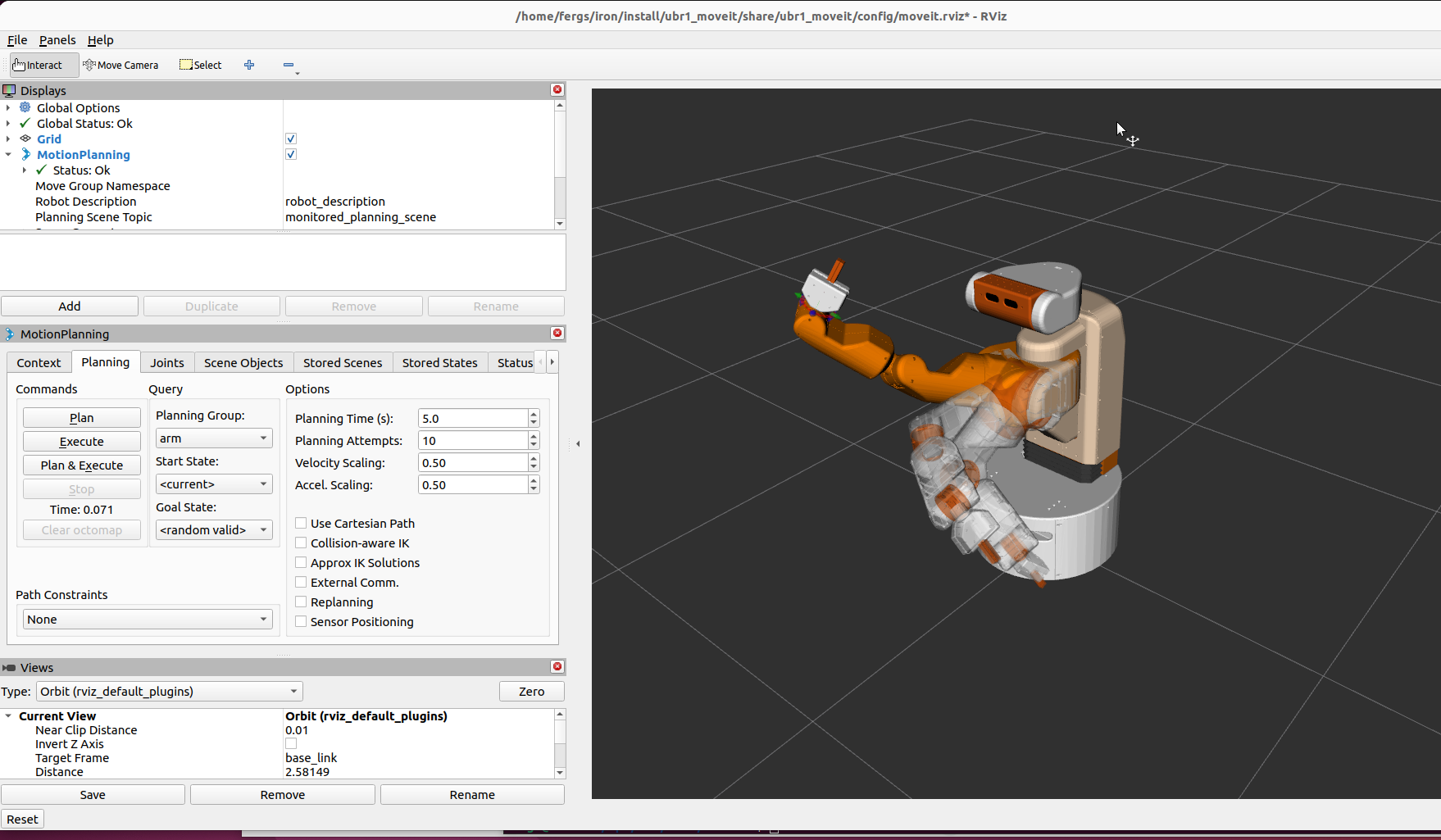

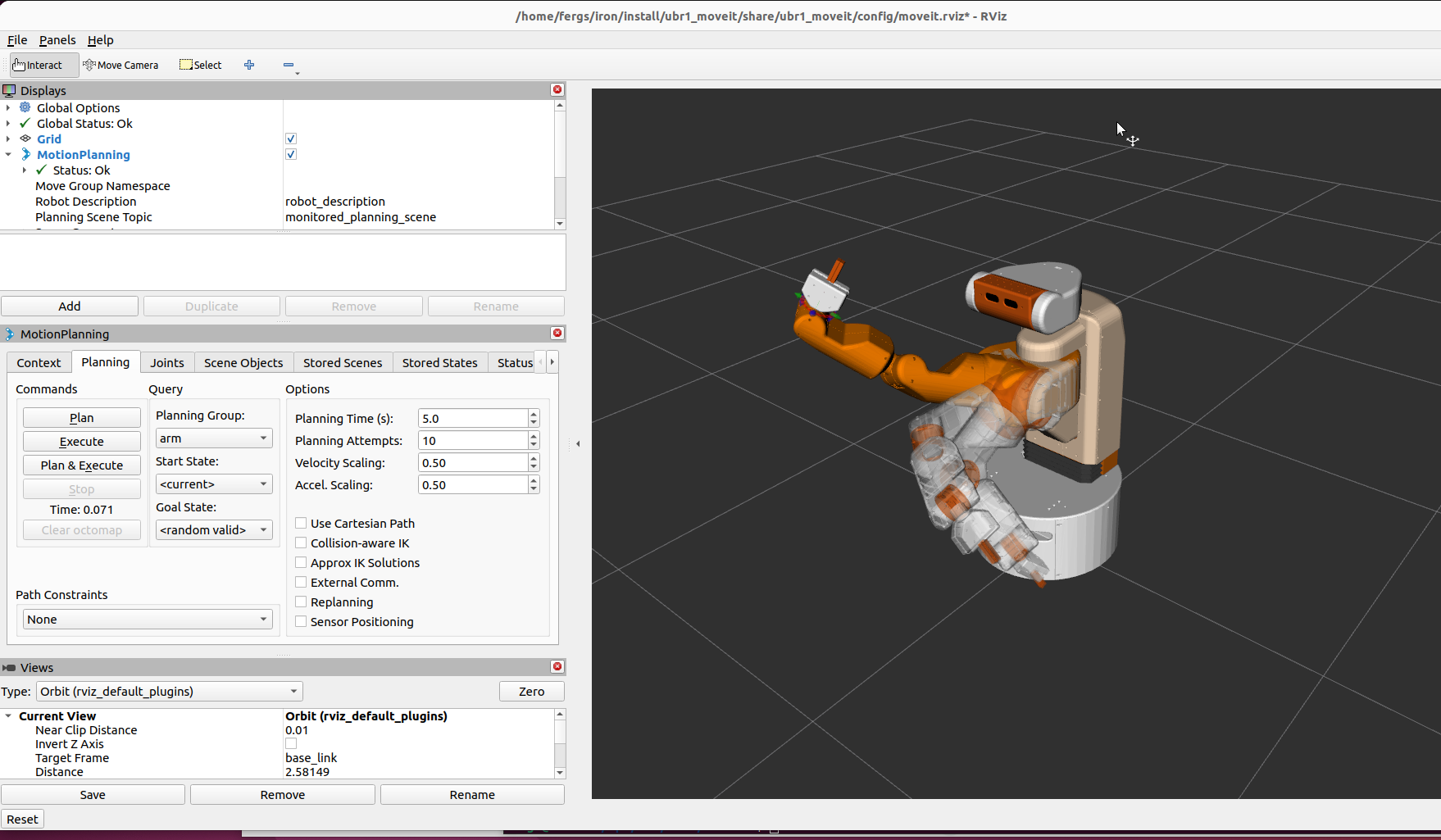

Once we have an export, we can test things using the demo.launch:

ros2 launch ubr1_moveit move_group.launch

Planning worked well but I was unable to execute any motion plans in the demo environment.

I found that because I had two groups using the arm - I needed to switch controllers

using the rqt_controller_manager tool. This isn’t an issue on the real robot

where I use robot_controllers instead of ros2_control; robot_controllers switches
controllers automatically when they receive an action goal.

One thing to keep in mind - when loading the rviz2 plugin for MoveIt2 - always use the

RVIZ2 launch file generated by the MSA. Since there is no shared parameter server,

this launch file will properly populate the kinematics parameters for rviz2. Without

those parameters, you’ll not be able to plan (and the interactive markers will be

missing).

Along the way, I had to fix a few bugs:

I found you might also have to set the DISPLAY variable in your environment if

running headless.

MoveIt2 Without ros2_control

Much of MoveIt2 assumes you are using ros2_control - but I’m not.

I continue to use my alternative framework

robot_controllers.

This is still supported in MoveIt using the simple controller manager

which does no controller switching, but interfaces with the standard

control_msgs actions expected for arms and gripper.

Some Interesting Debugging

Once I had MoveIt2 set up to interact with the robot, I could plan motions and move the arm

without issue, but I was unable to actually get MoveIt2 to control the gripper. It turned out

that the action server implementation in my gripper driver wasn’t entirely correct:

- The driver wasn’t storing the shared pointer to the active goal.

- This caused the goal to go out of scope and be destructed.

In theory this would be an easy issue to debug - but two things threw me off:

- I had been using my teleop node to open and close the gripper without issue - but at

no point was I actually looking at the responses from the action server, it was just

letting things run open loop.

- MoveIt2 reported that the action failed due to “preempted”. This didn’t make much

sense since none of the “preemption” logic in my action server was triggering any

of the logging messages.

I ported this driver to ROS 2 quite some time ago, but now the easy approach if you only

need one action goal active at a time would be to use the simple_actions package. I

did just that later on when calling my grasping perception for the pick and place demo.

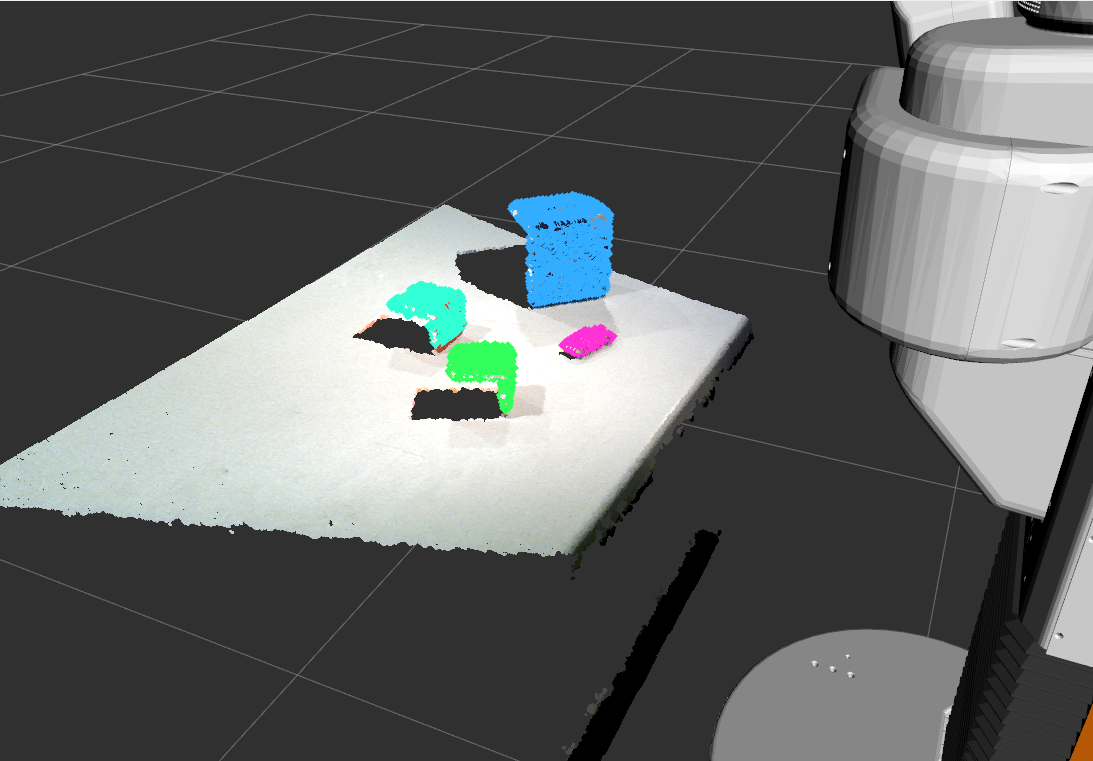

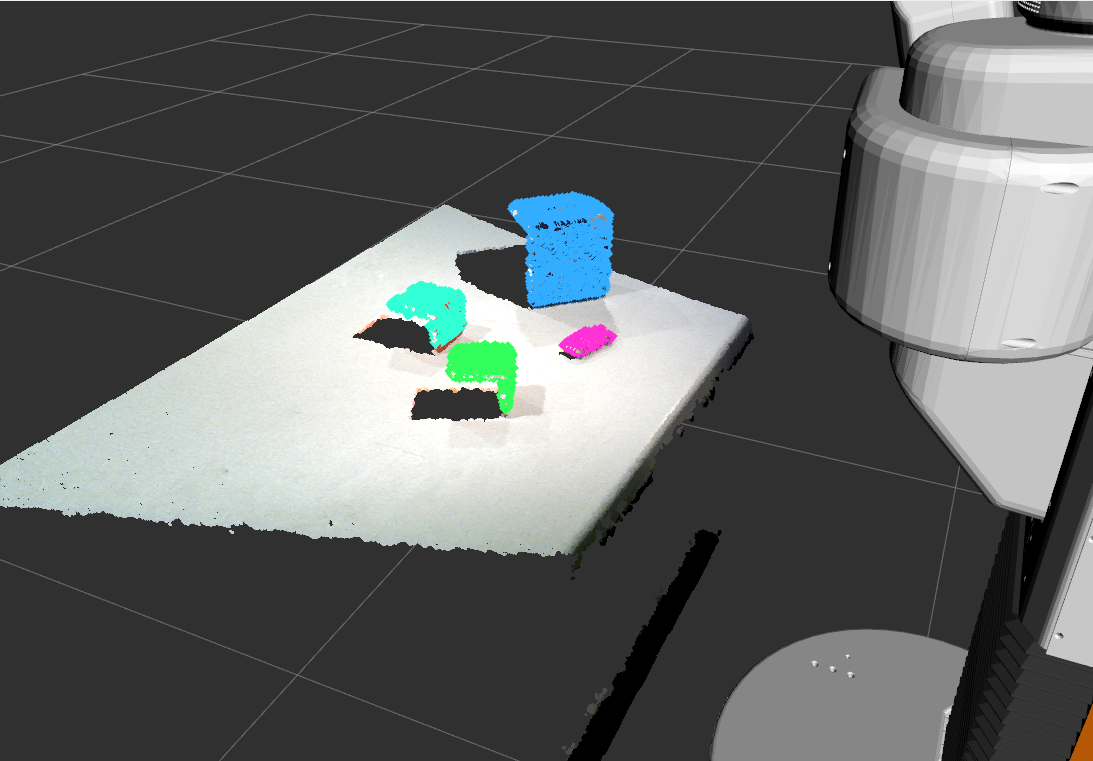

Simple Grasping

Perception is really the weak part of the ROS ecosystem. There are numerous packages

available for controls and planning, but not much for perception. Years ago I created

a package simple_grasping which segments a point cloud into objects and support

surfaces which can then be used for pick and place. Actually porting the code to

ROS 2 was fairly straightforward.

One of the issues I’m seeing in Jazzy that I have not yet resolved is that for large messages

(such as images or point clouds), a reliable publisher connecting to a best effort

subscriber seems not to work - there isn’t any issue with QoS mismatch, but no images get

through. My workaround has been to add QoS overrides to a number of nodes. For instance,

in simple_grasping, I have the following code:

// Would prefer to subscribe to head camera cloud as best effort
rclcpp::QoS points_qos(10);
points_qos.best_effort();

// But due to issues in Jazzy, allow overriding QoS at runtime
rclcpp::SubscriptionOptions sub_opts;
sub_opts.qos_overriding_options = rclcpp::QosOverridingOptions::with_default_policies();

cloud_sub_ = this->create_subscription<sensor_msgs::msg::PointCloud2>(
  "/head_camera/depth_registered/points",
  points_qos,
  std::bind(&BasicGraspingPerception::cloud_callback, this, _1),
  sub_opts);

(You can see the full set of changes here)

And then in my launch file, I can override the reliability of that subscription:

Node(
    name='basic_grasping_perception_node',
    package='simple_grasping',
    executable='basic_grasping_perception_node',
    parameters=[{
        'qos_overrides': {
            '/head_camera/depth_registered/points': {
                'subscription': {
                    'reliability': 'reliable',
                }
            }
        },
    }],
)
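For reference, a nested dictionary like this ends up as a single dotted parameter name on the node. The helper below is only an illustrative sketch of that flattening (it is not the launch_ros implementation), included to make the resulting parameter name explicit.

```python
def flatten_parameters(params, prefix=""):
    """Flatten nested parameter dictionaries into dotted parameter names."""
    flat = {}
    for key, value in params.items():
        name = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            # Recurse into nested dictionaries, extending the dotted prefix.
            flat.update(flatten_parameters(value, name))
        else:
            flat[name] = value
    return flat


overrides = {
    "qos_overrides": {
        "/head_camera/depth_registered/points": {
            "subscription": {
                "reliability": "reliable",
            }
        }
    }
}

flat = flatten_parameters(overrides)
for name, value in flat.items():
    print(f"{name} = {value}")
```

The topic name sits in the middle of the parameter name, slashes included, so the key in the launch file has to match the topic the node actually subscribes to.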

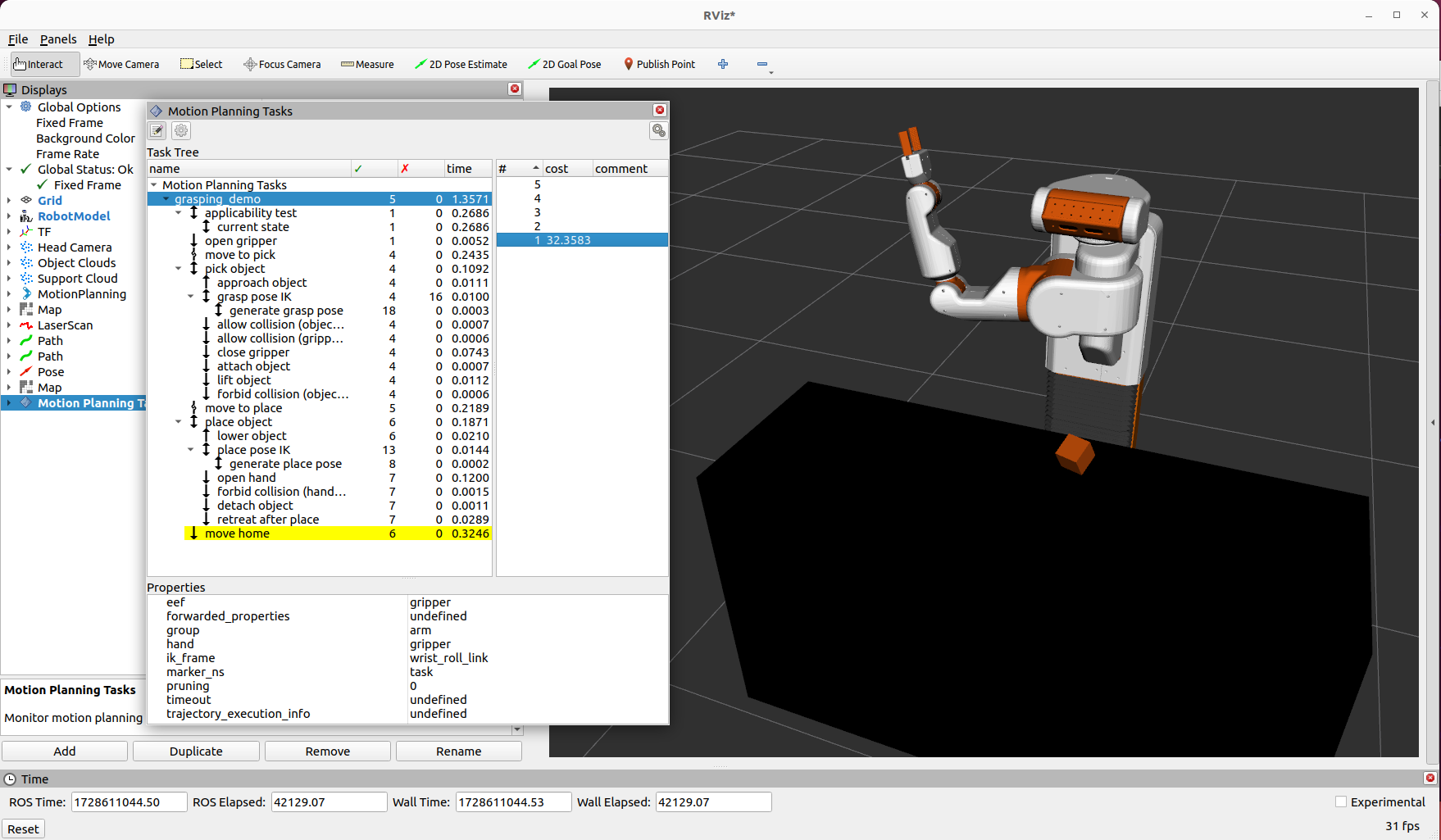

Pick and Place Demo

Not every feature from MoveIt in ROS 1 made it into MoveIt2. One of the big features that

was dropped was the pick and place part of move_group. Users are instructed

to use the MoveIt Task Constructor (MTC).

It seems to be a common theme in ROS 2 that things are designed to be much more capable,

but then are much harder to use and also poorly documented. This theme holds up for pick

and place. I ended up spending more time setting up an MTC pick and place node than

all of the other steps above combined.

There is a single tutorial

that shows how to do pick and place with a Franka arm. The tutorial uses

several simple components inside MTC to do the grasp generation and place

generation.

My implementation in the ubr1_demo

package is based off the tutorial. The files can be roughly described as:

- pick_place_task.cpp - Used to create an MTC task for pick and place. It largely follows the
tutorial, with a few minor tweaks:
  - Moved the allowCollision modifications of the planning scene a bit earlier in the pick
  pipeline, as I was having issues with plans failing due to perception being imperfect and
  the object to be grasped being in contact with the table top.
  - Switched to using a custom GenerateGraspsFromMsg grasp generator.
- pick_and_place.cpp - Actual node that calls the find_objects action from the simple_grasping
server, creates a pick and place MTC task, and then executes the task. This node uses David Lu’s
simple_actions package to call the find_objects action server.
- generate_grasps_from_msg.cpp - A custom pose generator that uses the grasps created by the
simple_grasping package rather than a hard coded list of rotations.
- launch/pick_place.launch.py - In order to load configurations (such as kinematics), the launch
file uses MoveItConfigsBuilder to add proper parameters to the node.

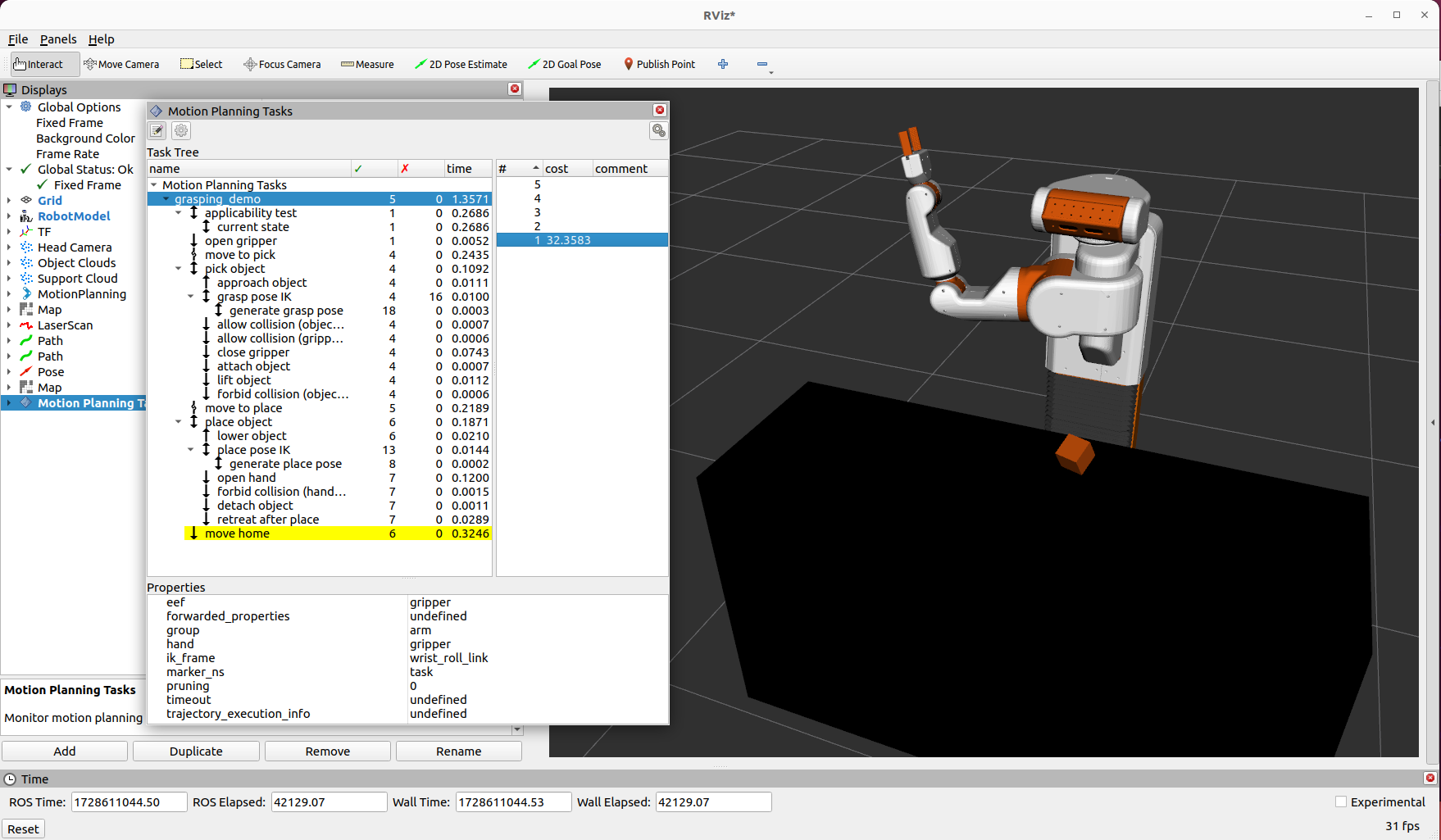

MTC has a nice visualization interface in rviz2 where you can inspect each step in the task. One note

that caused me some difficulty: you need to keep the MTC task in scope or you lose the visualization

in rviz2.

To actually execute the MTC plans, you need to also be running move_group with a modified launch file:

from moveit_configs_utils import MoveItConfigsBuilder
from moveit_configs_utils.launches import generate_move_group_launch


def generate_launch_description():
    moveit_config = MoveItConfigsBuilder("ubr1", package_name="ubr1_moveit").to_moveit_configs()
    # Add the following line to load the MTC capability
    moveit_config.move_group_capabilities["capabilities"] = ["move_group/ExecuteTaskSolutionCapability"]
    return generate_move_group_launch(moveit_config)

The only part of the MTC that I had some issues with was the Connect stage between the ready pose and

the pre-grasp pose. There was some minor noise in the gripper position, which was causing issues. I found

a closed issue where the following was recommended to be added to the .rosconsole/config file:

log4j.logger.ros.moveit_task_constructor.core.Connect=DEBUG

log4j.logger.ros.moveit_task_constructor.core.Connecting=DEBUG

Next Steps

Now that I have all the building blocks working for the UBR-1 on ROS 2, I want to create a more compelling demo.

Stay tuned.

14 Oct 2024

robots

ros

ros2

This is another blog post that has sat in draft for 2+ years. I’m pushing it out ahead

of ROSCon this year where I’ll be talking about migrating the UBR-1 mobile manipulator

to ROS2.

For a long time I’ve wanted a better open-sourced local controller for ROS navigation.

It’s finally implemented, and has been running on the robot fleet at Cobalt Robotics

for about two years now. The full code of the graceful_controller is available on

GitHub.

Motivation

There are a number of local controllers in the ROS1 and ROS2 navigation stacks, but most

of them are based on either the

Dynamic Window Approach,

Trajectory Rollout, or

optimization approaches such as

Time Elastic Bands.

Both DWA and Trajectory Rollout suffer from a series of challenges:

- All of these controllers have many parameters, but the ones for DWA are especially

difficult to tune because they are highly interrelated (for instance the goal, path,

and obstacle biases). This is marginally improved by the DWB controller.

- The sim_time parameter is especially difficult to tune for all operating
environments. Too long of a simulation time will make it difficult to enter tight
spaces (except at extremely slow speeds), while too short of a simulation time will
cause instability.

- DWA requires that users either have very good odometry response, or falsify their

acceleration limits to actually get robots moving. This is a reason so many robots

with low acceleration limits still use the older Trajectory Rollout controller.

- Even if these acceleration limits are defined properly, the way the forward simulations
work is pretty crufty.

For this reason, many people replace the default controllers - especially corporate

users of ROS. Sadly most of these improved controllers

don’t get released into the open source.

Goals

The goals for the new controller were quite simple:

- Accurately model the robot limitations (acceleration, deceleration, etc).

- Accurately forward simulate where the robot will end up.

- Produce repeatable results that look smooth and intelligent.

Underlying Algorithms

A number of years ago at Fetch Robotics, I wrote a package for our robots to autonomously

connect to their charge dock. Sometime later, Fetch open sourced the package under an LGPL

license. I used this same underlying control law for the first part of the new controller.

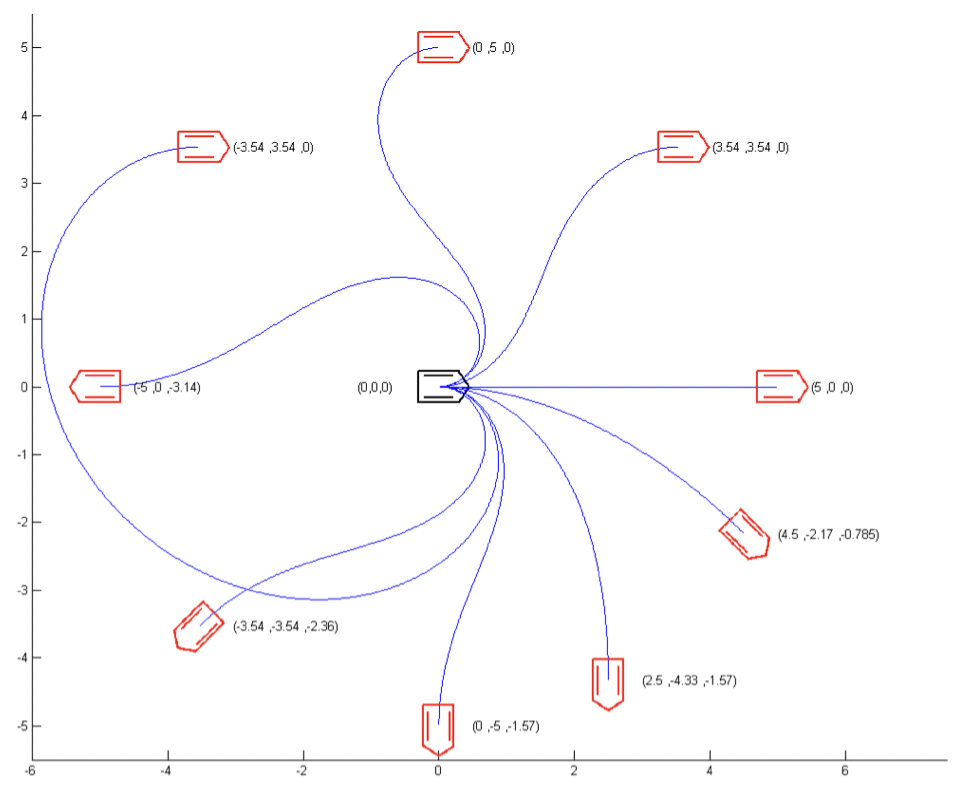

This underlying control law is based on a paper called A Smooth Control Law for Graceful Motion of Differential Wheeled Mobile Robots in 2D Environments by Park and Kuipers, which was presented at ICRA 2011.

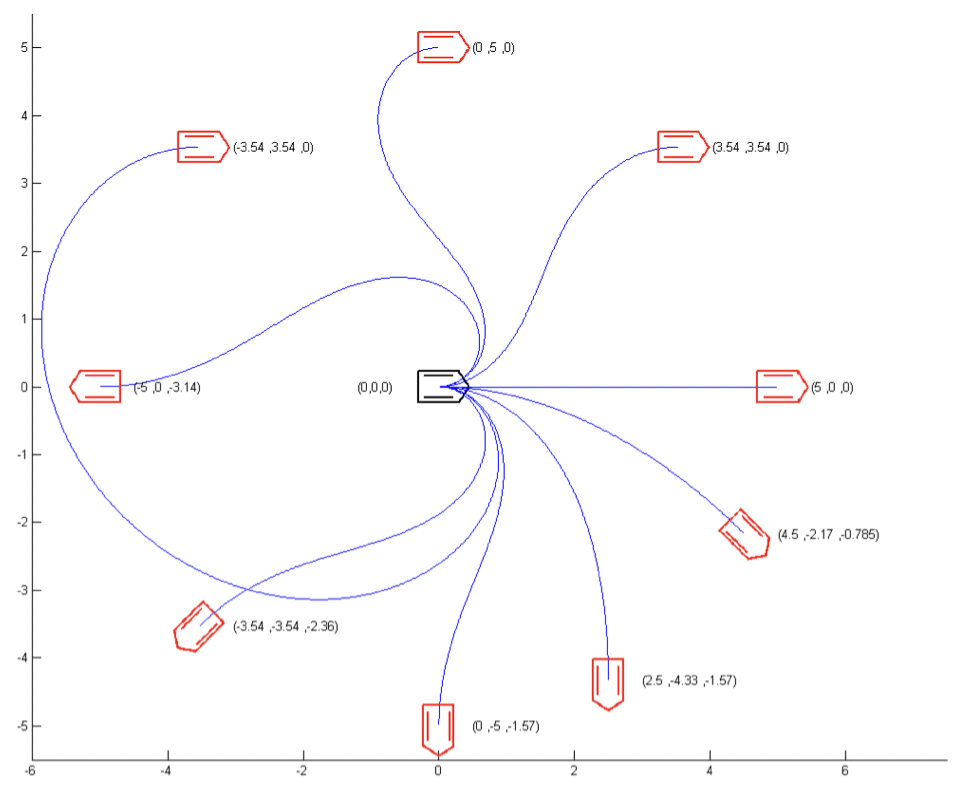

This control law implements a closed-form solution to find a kinematically feasible linear

and angular velocity for a differential drive robot to approach a pose, based on just a few

parameters that are actually fairly robot-independent. The image below (from the original paper)

shows some example trajectories:

The underlying control law has a number of nice features:

- It automatically slows down for highly curved paths.

- It automatically slows to zero as the target pose is reached.
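A minimal sketch of the control law, restating the curvature and velocity equations from the Park and Kuipers paper as best I can. The gains (k1, k2, beta, lam) are illustrative defaults rather than tuned values, and the linear ramp inside slowdown_radius is an assumption made for this example; the real packages may handle the final approach differently.

```python
import math


def graceful_control(r, theta, delta, v_max=1.0, k1=2.0, k2=3.0,
                     beta=0.4, lam=2.0, slowdown_radius=0.5):
    """Return (v, omega) for a target expressed in egocentric polar coordinates.

    r     - distance from the robot to the target pose
    theta - target heading relative to the line of sight
    delta - robot heading relative to the line of sight
    """
    if r <= 0.0:
        return 0.0, 0.0  # already at the target

    # Curvature that steers the robot onto a smooth approach to the target pose.
    kappa = -(1.0 / r) * (
        k2 * (delta - math.atan(-k1 * theta))
        + (1.0 + k1 / (1.0 + (k1 * theta) ** 2)) * math.sin(delta)
    )

    # Slow down as curvature grows (first feature in the list above).
    v = v_max / (1.0 + beta * abs(kappa) ** lam)

    # Assumed linear ramp so the speed goes to zero at the target (second feature).
    v = min(v, v_max * r / slowdown_radius)

    omega = kappa * v
    return v, omega


print(graceful_control(2.0, 0.0, 0.0))   # target straight ahead
print(graceful_control(2.0, 0.0, 1.0))   # robot pointed well away from the target
print(graceful_control(0.05, 0.0, 0.0))  # almost at the target
```

Both features fall out of the last few lines: the velocity is divided down as the curvature grows, and the ramp caps it near the target.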

The underlying control law really only works for approaching a single pose - which we call

the target pose. This is exactly what docking with a charger entails. For

something like navigation, we typically need to approach a series of poses in succession

(since following the control law directly towards the final pose in the path is usually not

collision free). Section IV.B of the paper describes a fairly complex approach to do this;
however, our controller takes a simpler approach.

Our controller attempts to use as the target pose the farthest pose in the

path which is both A) less than some maximum lookahead distance away from

the robot, and B) reachable with our current control law parameters without collision.

The reachability portion is implemented through a forward simulation. Unlike DWA, the

forward simulation is not time-based, but rather terminates when we have reached the

target pose. Additionally, since the target pose is the farthest that we

have collision checked, we will actually forward simulate based on stopping at the target

pose.
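The target pose selection described above can be sketched roughly as follows. The forward simulation is abstracted behind an is_reachable callback, and the function name, pose representation (x, y, yaw tuples) and parameters are all hypothetical; this is not the code from the graceful_controller package.

```python
import math


def select_target_pose(path, robot_xy, max_lookahead, is_reachable):
    """Pick the farthest pose along the path that is within the lookahead
    distance and that the forward simulation reports as reachable.

    path         - list of (x, y, yaw) poses ordered from near to far
    robot_xy     - (x, y) position of the robot
    is_reachable - callable standing in for the collision-checked simulation
    """
    # Walk the path from the far end so the first match is the farthest one.
    for pose in reversed(path):
        dist = math.hypot(pose[0] - robot_xy[0], pose[1] - robot_xy[1])
        if dist < max_lookahead and is_reachable(pose):
            return pose
    return None  # nothing usable; the caller has to handle this case


# Straight path along the x axis, one pose every 0.5 m.
path = [(0.5 * i, 0.0, 0.0) for i in range(1, 7)]

# Pretend an obstacle blocks every simulated approach beyond x = 1.2 m.
target = select_target_pose(path, (0.0, 0.0), 2.1, lambda pose: pose[0] < 1.2)
print(target)
```

Searching from the far end keeps the common case cheap: in open space the first candidate inside the lookahead distance is usually accepted after a single simulation.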

The new controller is built out of two ROS packages. The first package includes the

underlying control law, which is licensed under the LGPL. The second package implements

a ROS1 or ROS2 plugin for navigation, and is licensed under BSD since it is loosely

based on the DWA codebase.

Additional Features

While the basic control law with target pose simulation works fairly well, there are

a number of additional improvements implemented:

- Optional initial rotation - since the control law can cause the robot to take

large sweeping arcs when the target pose is behind or to the side of the robot, we

implement an optional initial in-place rotation that points the robot towards the

target pose. If in-place rotation is not possible (due to collision), the regular

control law will be applied.

- Optional final rotation - similarly, for the final pose, it may be preferable
to approach the final pose without considering the heading of the pose. Once at the
final pose, the robot can rotate to the final goal orientation.

- Footprint Scaling at Speed - this feature inflates the robot footprint

at higher speeds. This allows the robot to move quickly in more open spaces but

naturally slow down when the environment gets tighter.

- Orientation filter - can be used to smooth out the orientations of the

poses in the global plan.
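To make the footprint scaling idea concrete, here is a small sketch that inflates the footprint linearly with speed. The linear interpolation and every parameter name and value (scaling_start_speed, max_speed, max_scale) are assumptions for illustration; the controller's actual parameters and scaling curve may differ.

```python
def footprint_scale(speed, scaling_start_speed=0.5, max_speed=1.0, max_scale=1.5):
    """Return the footprint inflation factor for a given linear speed."""
    if speed <= scaling_start_speed:
        return 1.0  # slow enough that the true footprint is used
    if speed >= max_speed:
        return max_scale
    ratio = (speed - scaling_start_speed) / (max_speed - scaling_start_speed)
    return 1.0 + ratio * (max_scale - 1.0)


def scale_footprint(footprint, scale):
    """Scale footprint vertices about the robot origin."""
    return [(x * scale, y * scale) for x, y in footprint]


# Hypothetical 0.5 m square footprint centred on the robot.
footprint = [(0.25, 0.25), (0.25, -0.25), (-0.25, -0.25), (-0.25, 0.25)]

for speed in (0.2, 0.75, 1.0):
    scale = footprint_scale(speed)
    print(speed, scale, scale_footprint(footprint, scale)[0])
```

Because a fast trajectory is collision checked against the inflated footprint, it gets rejected in tight spaces while a slower one with a smaller footprint still passes, which is what produces the natural slowdown.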

ROS2 Support

As it turns out, porting to ROS2 was fairly straightforward. Improvements to the controller

API in ROS2 mean that the following features are far easier to implement:

- The controller command velocity generation API natively passes the current robot speed,

which negates the need for additional boilerplate that existed in the ROS1 controller

to listen to the odometry feedback.

- The notion of variable speed limits is native to Navigation2, and the controller has

an API for it.

- The goal checking is split out to a different plugin.

Future Work

There have been quite a few developments since I first started working on this controller

in early 2021.

The Nav2 project added the MPPI controller, which addresses many of my concerns with

both DWA and Trajectory Rollout. My only issue with this controller is that it uses

special SSE/NEON instructions for parallelization, and so it doesn’t work on all CPUs

(in particular, I found it wouldn’t work on the very low end Celeron processor in

my FireBot).

An alternate implementation of the control law was also added to Nav2 as part of the

docking server project. It’s not quite ready to be a full controller yet, but at some

point I expect that version to supersede the ROS 2 implementation of my graceful controller

package.

Kudos

This work was supported in part by Cobalt Robotics.

01 Oct 2024

robots

This is not investment advice.

This probably shouldn’t be construed as advice of any kind.

Robotics Startups of the Past and Present

Over the past year or two, I’ve had a number of conversations that were basically

“why is it so much harder to get funding for robotics stuff these days?”.

While you could blame macroeconomics, lack of LP liquidity, and higher interest

rates, I think this actually largely comes down to the fact that there haven’t

been all that many really big exits in robotics. Robotics founders tend to be

very good at building cool tech solutions, but not so good at actually selling

and monetizing that technology.

When Kiva Systems sold to Amazon in 2012 for $775M, there weren’t all that many robotics

companies out there - so it looked like maybe this was a space that would generate

great returns. Now, a little over 10 years later, there have been a handful of exits

in the several-hundred-million-USD range (Universal Robots, 6Rivers, MIR, Fetch Robotics, Clearpath Robotics / Otto),

but most of those raised significantly more capital than Kiva Systems did and all of them

exited for a lower price than Kiva. None of them exited at the same kind of crazy

valuation that Google bought Nest for in 2014 ($3.2B - this was another “hardware”

exit that drove interest in robotics around that time).

Universal Robots and Mobile Industrial Robots (MIR) are set up more like traditional robot

manufacturers - they build robots, sell them at relatively low margins, and use an extensive

network of integrators who actually install and program the robots. Notably, both companies

come out of the EU - I feel that most US-based investors who do seed/A rounds would not

fund this sort of company today.

What those US-based investors do fund looks a bit different. These companies are largely

founded by robotics experts. They are vertically integrated, often manufacturing their own

robots in-house, building their own mapping, localization, navigation, and cloud-based

fleet management, and often setting up their own direct sales channels. Many of those

direct sales channels focus on “recurring revenue” by offering robots as a service.

There are numerous examples.

One outlier to this model though would be Locus Robotics. They weren’t founded by robotics

experts - they were founded by domain experts in third party logistics (3PL). These domain

experts were also a built-in customer - as an early customer of Kiva Systems, they had a

real need for a new robotics solution after the Kiva acquisition.

And hey, guess what, Locus is doing pretty well.

Robotics is not SaaS

What surprises me is there are still investment firms trying this same playbook today.

They go out and fund a robotics company founded entirely by roboticists (and for some

reason, many seem to think there are bonus points if every one of the founders is a

Robotics PhD and has never held a job outside of academia).

Some of these firms claim that RaaS is the new SaaS: spoiler alert - it’s probably not!

Robotics is capital intensive. Especially if you are buying all these robots, keeping them

on the books, and then renting them out with a 1-2 year payback window. Even worse:

robots age a lot worse than servers.

The really tricky thing about “robotics startups” is that the robot is JUST ONE PART

of the business / product.

It’s really just the starting point - and you will eventually end up expending

far more effort on the rest of the product: the software you need for deployment and

monitoring, the sales organization, the integration teams.

Finally, while many folks will tell you that “hardware is hard” - the bigger problem is that

hardware is SLOW. Supply chains have improved from the days of the pandemic, but

they are still slow, inefficient and generally a bit of a hot mess. So when you do suddenly

land all those orders - good luck getting the parts you need to actually fulfill the order

quickly.

/rant

Robotics Ecosystems

And now we get to something maybe actually useful to somebody. If our current generation of

robotic startups are fully vertically integrated and founded solely by robotics people,

what does the next generation look like?

Much of the really interesting stuff with computers and the internet started to happen when

people who weren’t just computer nerds were able to build companies in the space. I

think the same thing could happen for robotics.

These next generation robotics companies will have a founding team with domain experts

in whatever problem the robot is solving. These companies probably won’t even be called

robotics companies. They’ll be healthcare automation startups, or 3PL startups, etc.

They probably won’t be fully vertically integrated, instead choosing to use more off

the shelf hardware and software components.

We already see some of this happening today - in the early days of the RoboBusiness

conference, all sorts of “robot” companies exhibited - today, those robot companies

put much more emphasis on the tradeshows for their industry - shows like ProMat

or Modex for warehouse logistics

providers. The majority of exhibitors at robotics conferences are now selling

(largely hardware) components to robot companies.

With an ecosystem of more focused next-generation robotics companies, these startups

won’t have to build everything in-house. Companies like

InOrbit,

Formant and

Foxglove

exist today and offer a slice of tools needed to build a robotics solution. You can buy

robots from UR and MIR. The ROS 2 variant of navigation, Nav2, and arm planning (MoveIt2)

are already being used in

commercial products with far less customization than was needed in ROS 1 - and their

respective supporting companies (Open Navigation LLC and

PickNik) exist to help next-generation

robotics companies leverage these open source projects.

The Actionable Stuff

Numerous people have asked for startup advice over the years - I have often, wrongly,

focused on very narrow things (don’t go cheap on lawyers, etc).

I’m not sure I’ll ever do another robotics company, but here is what my dream

founder team would look like for a future robotics startup:

- CEO - A domain expert in whatever industry you are selling into. Significant

product experience. Should be able to sell - the CEO’s industry connections

will basically take the place of hiring a sales team initially.

- CTO - Robotics expert, with product experience. In order to properly lead

the (hopefully relatively small) engineering organization, and integration

of Off-The-Shelf (OTS) components and vendors, the CTO will need a solid grasp

of hardware, web/enterprise software, and any other product specific technologies.

Put that team together and then find a real product need - the simpler the better.

Robotics people love to over-complicate things. Do you really need a mobile base?

Do you really need an arm? Or is there some simpler automation solution that you

should be tackling?