Robot Calibration for ROS

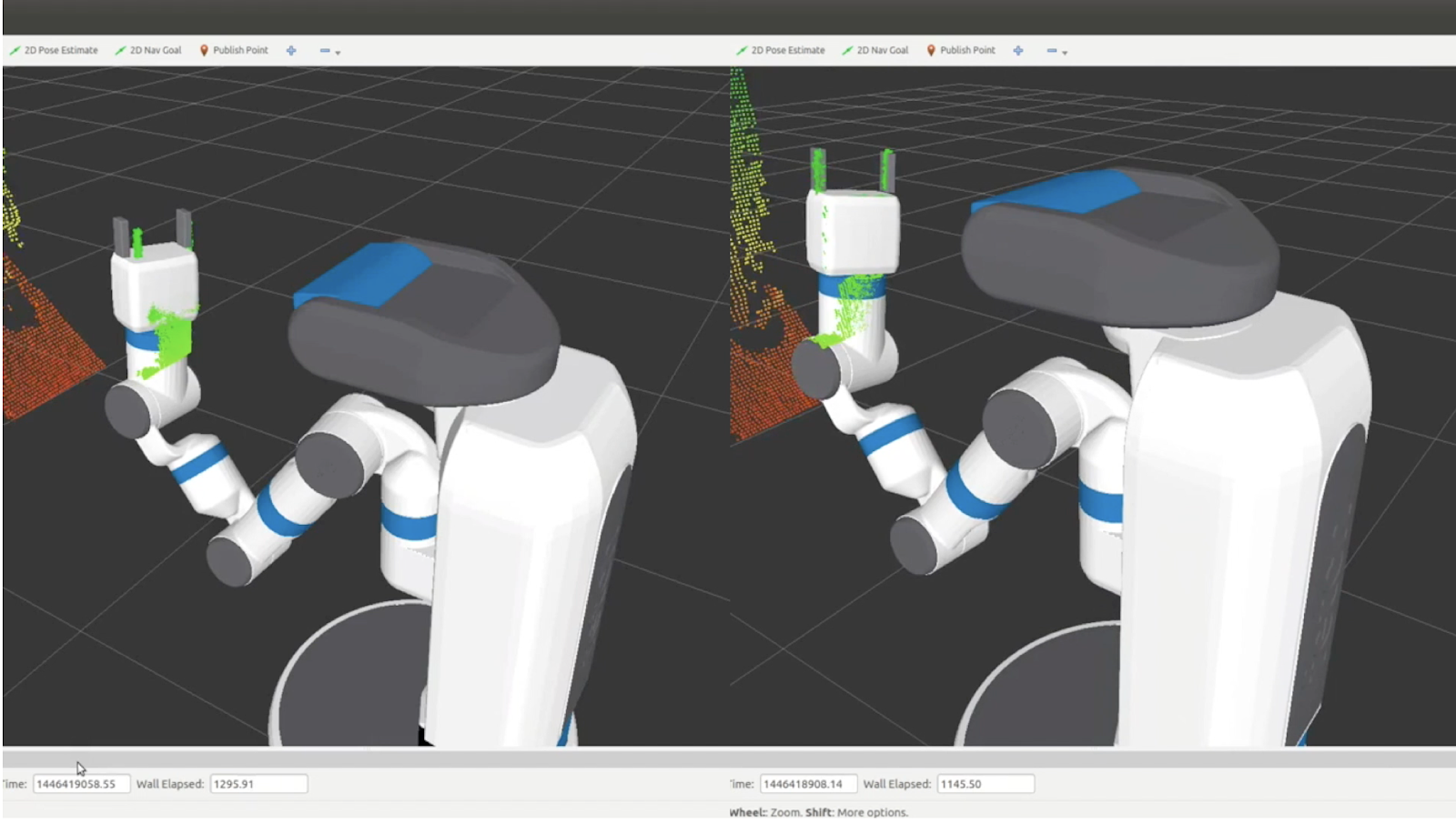

09 Apr 2020 | calibration, national-robotics-week, robots, ros

Uncalibrated vs. Calibrated

Calibration is an essential step in most robotics applications. Robots have many things that need to be calibrated:

- Camera intrinsics - basically determining the parameters for the pinhole camera model. On some RGBD (3D) cameras, this also involves estimating other parameters used in their projections. This is usually handled by exporting YAML files that are loaded by the drivers and broadcast on the device's camera_info topic.

- Camera extrinsics - where the camera is located. This often involves updating the URDF to properly place the camera frame.

- Joint offsets - small errors in the zero position of a joint can cause huge displacement in where the arm actually ends up. This is usually handled by a "calibration_rising" flag in the URDF.

- Wheel rollout - for good odometry, you need to know how far you really have travelled. If your wheels wear down over time, that has to be taken into account.

- Track width - on differential-drive robots, the distance between your drive wheels is an essential value to know for good odometry when turning.

- IMU - when fusing wheel-based odometry with a gyro, you want to make sure that the scale of the gyro values is correct. The gyro bias is usually estimated online by the drivers, rather than given a one-time calibration. Magnetometers in an IMU also need to be calibrated.
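To see why wheel rollout and track width matter for odometry, here is a minimal sketch of a differential-drive odometry update. It is not taken from any particular driver: the function name, its parameters, and the numbers in the usage example are all made up for illustration.

```python
import math


def integrate_odometry(left_ticks, right_ticks, ticks_per_rev,
                       wheel_radius, track_width, pose=(0.0, 0.0, 0.0)):
    """Update an (x, y, theta) pose from encoder tick deltas.

    wheel_radius sets the rollout (distance per tick) and track_width
    sets how much heading change a left/right difference produces.
    All names and units (meters, radians) are illustrative assumptions.
    """
    x, y, theta = pose
    meters_per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev
    d_left = left_ticks * meters_per_tick
    d_right = right_ticks * meters_per_tick

    d_center = (d_left + d_right) / 2.0
    d_theta = (d_right - d_left) / track_width

    # Midpoint integration: assume the heading changes linearly over the step.
    x += d_center * math.cos(theta + d_theta / 2.0)
    y += d_center * math.sin(theta + d_theta / 2.0)
    theta += d_theta
    return (x, y, theta)


if __name__ == "__main__":
    # Hypothetical robot: 0.05 m wheels, 1000 ticks/rev, true track width 0.30 m.
    # These tick counts correspond to exactly one full spin in place.
    ticks = 3000
    true_pose = integrate_odometry(-ticks, ticks, 1000, 0.05, 0.30)
    bad_pose = integrate_odometry(-ticks, ticks, 1000, 0.05, 0.31)
    error_deg = math.degrees(true_pose[2] - bad_pose[2])
    print(f"heading error after one spin: {error_deg:.1f} deg")
```

In this made-up case, a 1 cm error in track width leaves the odometry about 11.6 degrees short after a single in-place rotation. An error in wheel radius scales every distance the same way, so it accumulates on straight lines as well.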

Evolution of ROS Calibration Packages

There are actually quite a few packages out there for calibrating ROS-based robots. The first package was probably pr2_calibration developed at Willow Garage. While the code inside this package isn't all that well documented, there is a paper describing the details of how it works: Calibrating a multi-arm multi-sensor robot: A Bundle Adjustment Approach.

In basic terms, pr2_calibration works by putting checkerboards in the robot's grippers, moving the arms and head to a large number of poses, and then estimating various offsets which minimize the reprojection errors through the two different chains (we can estimate where the checkerboard points are through its connection with the arm versus what the camera sees). Nearly all of the available calibration packages today rely on similar strategies.
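The idea of comparing two chains can be shown with a toy problem. The sketch below is not how pr2_calibration is implemented: it assumes a planar two-link arm, pretends the camera reports the tip position directly in the arm's base frame (so there is no image projection), and recovers two joint zero offsets with a hand-rolled Gauss-Newton loop. The link lengths, offsets, and function names are all hypothetical.

```python
import math

LINK_LENGTHS = (0.4, 0.3)  # hypothetical link lengths in meters


def forward_kinematics(joints, offsets):
    """Tip position of a planar 2-link arm from encoder readings plus zero offsets."""
    a1 = joints[0] + offsets[0]
    a2 = a1 + joints[1] + offsets[1]
    x = LINK_LENGTHS[0] * math.cos(a1) + LINK_LENGTHS[1] * math.cos(a2)
    y = LINK_LENGTHS[0] * math.sin(a1) + LINK_LENGTHS[1] * math.sin(a2)
    return x, y


def make_samples(true_offsets):
    """Simulate the 'camera chain': where the tip really is for each commanded pose."""
    samples = []
    for q1 in (-0.8, 0.0, 0.7):
        for q2 in (0.4, 1.0, 1.5):
            samples.append(((q1, q2), forward_kinematics((q1, q2), true_offsets)))
    return samples


def residuals(offsets, samples):
    """Stack (arm-chain prediction - camera observation) over all samples."""
    r = []
    for joints, observed in samples:
        px, py = forward_kinematics(joints, offsets)
        r.extend((px - observed[0], py - observed[1]))
    return r


def estimate_offsets(samples, iterations=20, eps=1e-6):
    """Gauss-Newton on the two offsets, using a forward-difference Jacobian."""
    offsets = [0.0, 0.0]
    for _ in range(iterations):
        r0 = residuals(offsets, samples)
        cols = []
        for k in range(2):
            bumped = list(offsets)
            bumped[k] += eps
            rk = residuals(bumped, samples)
            cols.append([(a - b) / eps for a, b in zip(rk, r0)])
        # Solve the 2x2 normal equations (J^T J) d = -J^T r with Cramer's rule.
        a11 = sum(c * c for c in cols[0])
        a12 = sum(c * d for c, d in zip(cols[0], cols[1]))
        a22 = sum(d * d for d in cols[1])
        b1 = -sum(c * r for c, r in zip(cols[0], r0))
        b2 = -sum(d * r for d, r in zip(cols[1], r0))
        det = a11 * a22 - a12 * a12
        offsets[0] += (b1 * a22 - a12 * b2) / det
        offsets[1] += (a11 * b2 - a12 * b1) / det
    return offsets


if __name__ == "__main__":
    samples = make_samples(true_offsets=(0.03, -0.02))
    print(estimate_offsets(samples))
```

A real system has many more free parameters (camera pose, several joints, sometimes intrinsics), measures errors in the sensor's own observation space, and has to cope with noise, which is why a proper solver and a good spread of poses matter.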

One of the earliest robot-agnostic packages would be calibration. One of my last projects at Willow Garage before joining their hardware development team was to make pr2_calibration generic; the result of this effort is the calibration package. The downside of both this package and pr2_calibration is that they are horribly slow. For the PR2, we needed many, many samples - getting all those samples often took 25 minutes or more. The optimizer that ran over the data was also slow - adding another 20 minutes. Sadly, even after 45 minutes, the calibration failed quite often. At the peak of Willow Garage, when we often had 20+ summer interns in addition to our large staff, typically only 2-3 of our 10 robots were calibrated well enough to actually use for grasping.

After Willow Garage, I tried a new approach, using Google's Ceres solver to build a new calibration system. The result was the open source robot_calibration package. This package is used today on the Fetch robot and others.

What robot_calibration Can Do

The robot_calibration package is really intended to be an all-inclusive calibration system. Currently, it mainly supports 3D sensors. It does take some time to set up for each robot since the system is so broad - I'm hoping to eventually create a wizard/GUI like the MoveIt Setup Assistant to handle this.

There are two basic steps to calibrating any robot with robot_calibration: first we capture a set of data which mainly includes point clouds from our sensors, joint_states data of our robot pose, and some TF data. Then we do the actual calibration step by running that data through our optimizer to generate corrections to our URDF, and also possibly our camera intrinsics.

One of the major benefits of the system is the reliability and speed. On the Fetch robot, we only needed to capture 100 poses of the arm/head to calibrate the system. This takes only 8 minutes, and the calibration step typically takes less than a minute. One of my interns, Niharika Arora, ran a series of experiments in which we reduced the number of poses down to 25, meaning that capture and calibration took only three minutes - with a less than 1% failure rate. Niharika gave a talk on robot_calibration at ROSCon 2016 and you can see the video here. We also put together a paper (that was sadly not accepted to ICRA 2016) which contains more details on those experiments and how the system works [PDF].

In addition to the standard checkerboard targets, robot_calibration also works with LED-based calibration targets. The four LEDs in the gripper flash a pattern allowing the robot to automatically register the location of the gripper:

LED-based calibration target.

One of the coolest features of robot_calibration is that it is very accurate at determining joint zero angles. Because of this, we did not need fancy jigs or precision machined endstops to set the zero positions of the arm. Technicians can just eye the zero angle and then let calibration do the rest.
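For a rough sense of what a zero-angle error costs, the sketch below computes how far a point on the arm moves when a single joint is off by a small angle. The helper name and the 1.0 m reach are assumptions for illustration, not measurements from any robot.

```python
import math


def tip_displacement(reach_m, zero_error_deg):
    """Chord length swept by a point reach_m from the joint axis
    when that joint is rotated by zero_error_deg."""
    return 2.0 * reach_m * math.sin(math.radians(zero_error_deg) / 2.0)


if __name__ == "__main__":
    for err in (0.5, 1.0, 2.0):
        mm = tip_displacement(1.0, err) * 1000.0
        print(f"{err:.1f} deg zero error at 1.0 m reach -> {mm:.1f} mm")
```

At 1 m of reach, a single degree of error at one joint already moves the gripper by about 17 mm, and errors at several joints stack up along the chain.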

There is quite a bit of documentation in the README for robot_calibration.

Alternatives

I fully realize that robot_calibration isn't for everyone. If you've got an industrial arm that requires no calibration and just want to align a single sensor to it, there are probably simpler options.